Does Google's AI Favor YouTube? We Analyzed 180 Queries Across Three LLMs to Find Out

Updated on April 7, 2026

Everyone wants to be visible in GEO results. But the best anyone can do is keep making educated guesses… or carry out their own research to find out once and for all.

And there's a question that's recently been making rounds in SEO and GEO circles: does Google build its AI responses using content on Google-owned platforms like YouTube?

It's a fair one. If Gemini systematically surfaces YouTube above other platforms, not because it's better but because it's Google's, that would tell us something important about how AI models are shaped by the companies that build them, and about the bias that may come with that.

As with our other theories, we decided to test it. We analyzed how three leading AI models (Gemini, Claude, and ChatGPT) cite YouTube across different query types and languages. Let's break down what we found.

The Setup

We ran all three models across 60 distinct queries covering four categories: Tutorials, Niche Product Reviews, Technical Popularization, and News Explanation. Each query was run in English, French, and Polish, giving us 180 total data points per model. It also gave us a way to detect whether citation behavior shifts depending on the language of the user.
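The arithmetic behind that design can be sketched in a few lines. This is a minimal illustration rather than the harness used for the study: the `build_grid` helper is hypothetical, and it assumes the 60 queries split evenly into 15 per category (which matches the 45 Tutorial runs mentioned later).

```python
from itertools import product

CATEGORIES = [
    "Tutorials",
    "Niche Product Reviews",
    "Technical Popularization",
    "News Explanation",
]
LANGUAGES = ["English", "French", "Polish"]
QUERIES_PER_CATEGORY = 15  # assumption: 60 queries split evenly over 4 categories


def build_grid():
    """Return one (category, query_id, language) tuple per run of a single model."""
    query_ids = range(1, QUERIES_PER_CATEGORY + 1)
    return list(product(CATEGORIES, query_ids, LANGUAGES))


grid = build_grid()
tutorial_runs = [run for run in grid if run[0] == "Tutorials"]

print(len(grid))           # 180 runs per model
print(len(tutorial_runs))  # 45 Tutorial runs per model
```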

Methodology note: This study used Gemini 3 Flash, Claude Sonnet 4.6, and GPT-5 mini. These are the latest, fastest models available at the time of testing, and those most commonly used by free-tier accounts across all three platforms.

However, we quickly found that ChatGPT doesn't cite sources spontaneously. Unlike Gemini and Claude, it doesn't include links or source citations in its responses by default; you have to ask for them explicitly. This made it much harder to tell whether ChatGPT had in fact used YouTube as a source.

To get comparable data, we added an explicit citation request to the end of each ChatGPT prompt. This created a slight bias in the results (more on that below), but the follow-up testing helped account for it.

Overall Findings: Gemini Cites YouTube Most, But Claude Is Close Behind

Averaged across all query types and languages, Gemini cited YouTube in 21.7% of its responses. Claude came in second at roughly 11%, and ChatGPT was a distant third at around 3%.
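A citation rate of this kind is simply the share of responses that contain at least one YouTube link. The sketch below shows one way to compute it; it is an assumption about the counting rule, not the study's actual pipeline. The function names, the choice to count both `youtube.com` and `youtu.be`, and the sample URLs are all illustrative.

```python
from urllib.parse import urlparse

# Assumption: these are the hosts that count as "YouTube".
YOUTUBE_HOSTS = {"youtube.com", "youtu.be"}


def is_youtube(url: str) -> bool:
    """True if the URL's host is a YouTube domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in YOUTUBE_HOSTS)


def youtube_citation_rate(responses: list[list[str]]) -> float:
    """Percentage of responses that cite YouTube at least once.

    Each response is represented as the list of URLs it cited.
    """
    if not responses:
        return 0.0
    hits = sum(1 for urls in responses if any(is_youtube(u) for u in urls))
    return 100 * hits / len(responses)


# Made-up sample: four responses, two of which link to YouTube.
sample = [
    ["https://www.youtube.com/watch?v=abc", "https://www.bbc.com/news"],
    ["https://docs.docker.com/get-started/"],
    ["https://youtu.be/abc"],
    [],
]
print(youtube_citation_rate(sample))  # 50.0
```

Counting per response rather than per link means a reply with five YouTube links weighs the same as a reply with one, which keeps the rate comparable across models that cite at different volumes.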

So yes, Gemini does cite YouTube significantly more than its competitors. But the picture gets more interesting when you break it down by language.

Query Language Impacts Citations

One of the study's most revealing findings is what happens to YouTube citation rates when the query language changes.

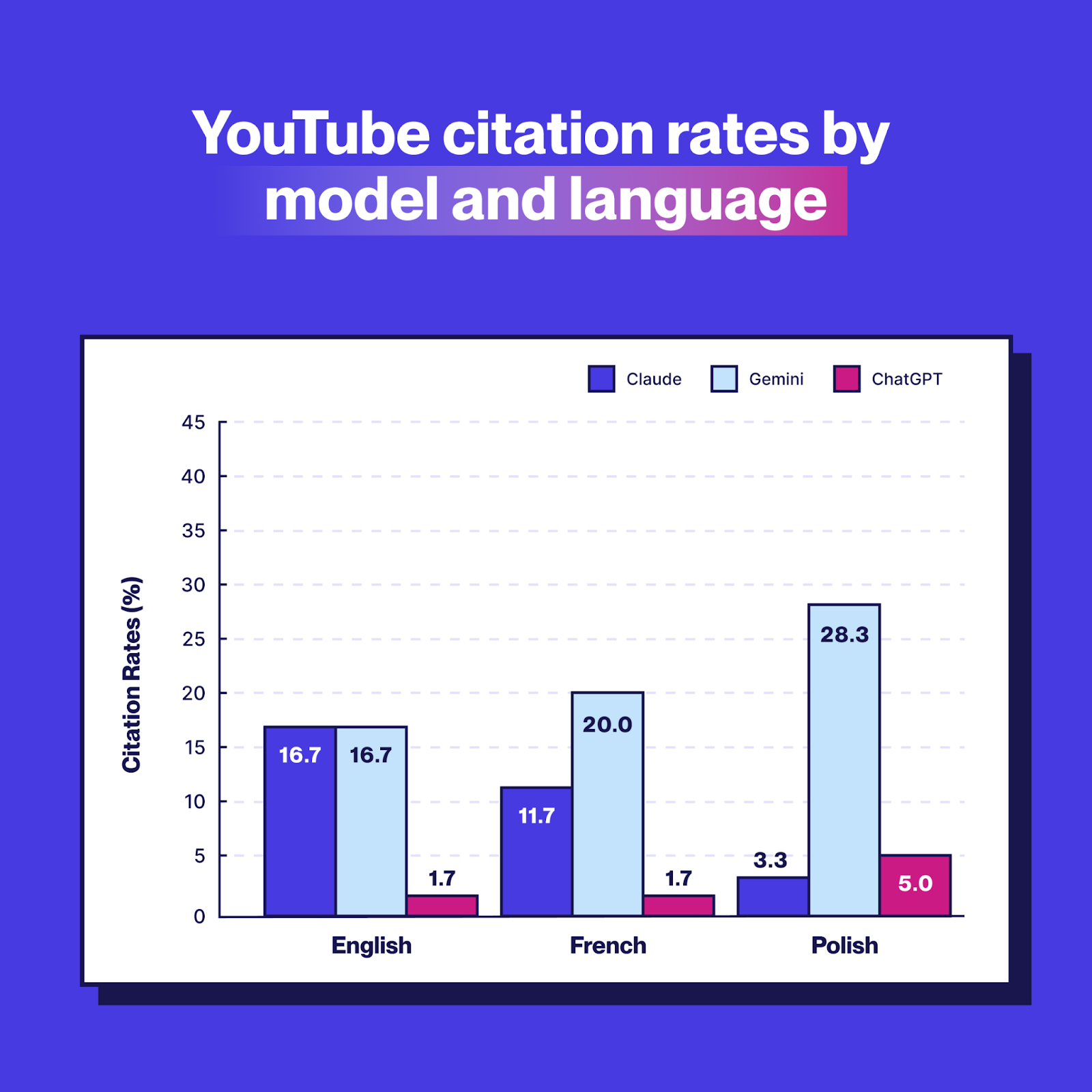

In English, Claude and Gemini cited YouTube at an identical rate of 16.7%. But as queries shifted to French and Polish, their behavior diverged sharply.

Unexpectedly, Gemini's YouTube citations increase in non-English languages, while Claude's decrease. The gap between the two models grows from zero in English to nearly 25 percentage points in Polish.
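Note that the gap is expressed in percentage points, meaning a plain difference between two rates rather than a relative change. A minimal sketch of that calculation, using only the English figures: the count of 10 out of 60 is back-calculated from the published 16.7% and is an assumption, not raw data from the study.

```python
def rate_pct(hits: int, total: int) -> float:
    """Share of responses citing YouTube, as a percentage."""
    return 100 * hits / total


def gap_points(rate_a: float, rate_b: float) -> float:
    """Difference between two rates in percentage points (not relative percent)."""
    return rate_a - rate_b


# Assumption: 16.7% of 60 English queries corresponds to 10 responses per model.
gemini_en = rate_pct(10, 60)
claude_en = rate_pct(10, 60)

print(round(gemini_en, 1))               # 16.7
print(gap_points(gemini_en, claude_en))  # 0.0
```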

This large gap is arguably the most significant finding in the study. If Gemini were simply recommending YouTube because it's a genuinely high-quality source for certain query types, you'd expect its behavior to stay relatively stable across languages.

Instead, it leans into YouTube more heavily as queries move into non-English territory, which is the pattern you'd predict if the model had been trained or tuned to favor a property owned by its parent company. It's important to note that Google has not confirmed this anywhere; it is only what our research has led us to believe.

It shouldn't be surprising that AI models carry inherent biases (they're trained on human-produced datasets and tweaked accordingly, after all), and that seems to be the case with Gemini and YouTube.

Query Type Matters

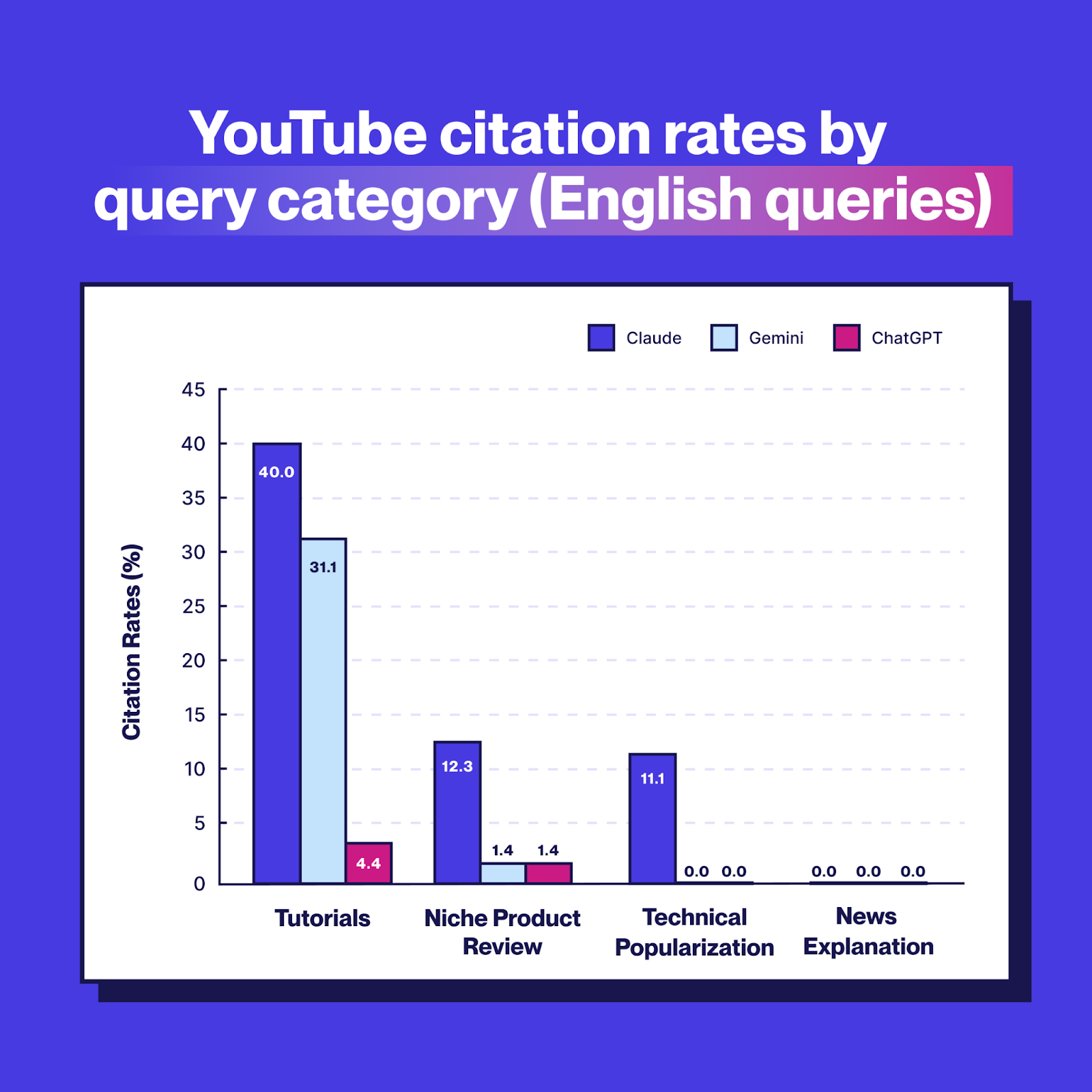

Not all content categories trigger YouTube citations equally.

Tutorials drove the highest YouTube citation rates by far across all models, while News Explanation queries produced zero YouTube citations across the board. We suspect this is because it's far easier and faster to get news explained through an AI summary, or by simply asking an LLM, than by watching a video.

The Tutorials result is unsurprising. YouTube is a dominant resource for how-to content and remains the go-to platform for it, so the higher citation rates in that category likely reflect real-world usage rather than model bias.

So the more diagnostic question is what happens in categories where YouTube's dominance is less clear-cut, such as Niche Product Reviews and Technical Popularization, and whether Gemini continues to overindex there.

The Spontaneous vs. Requested Citation Gap

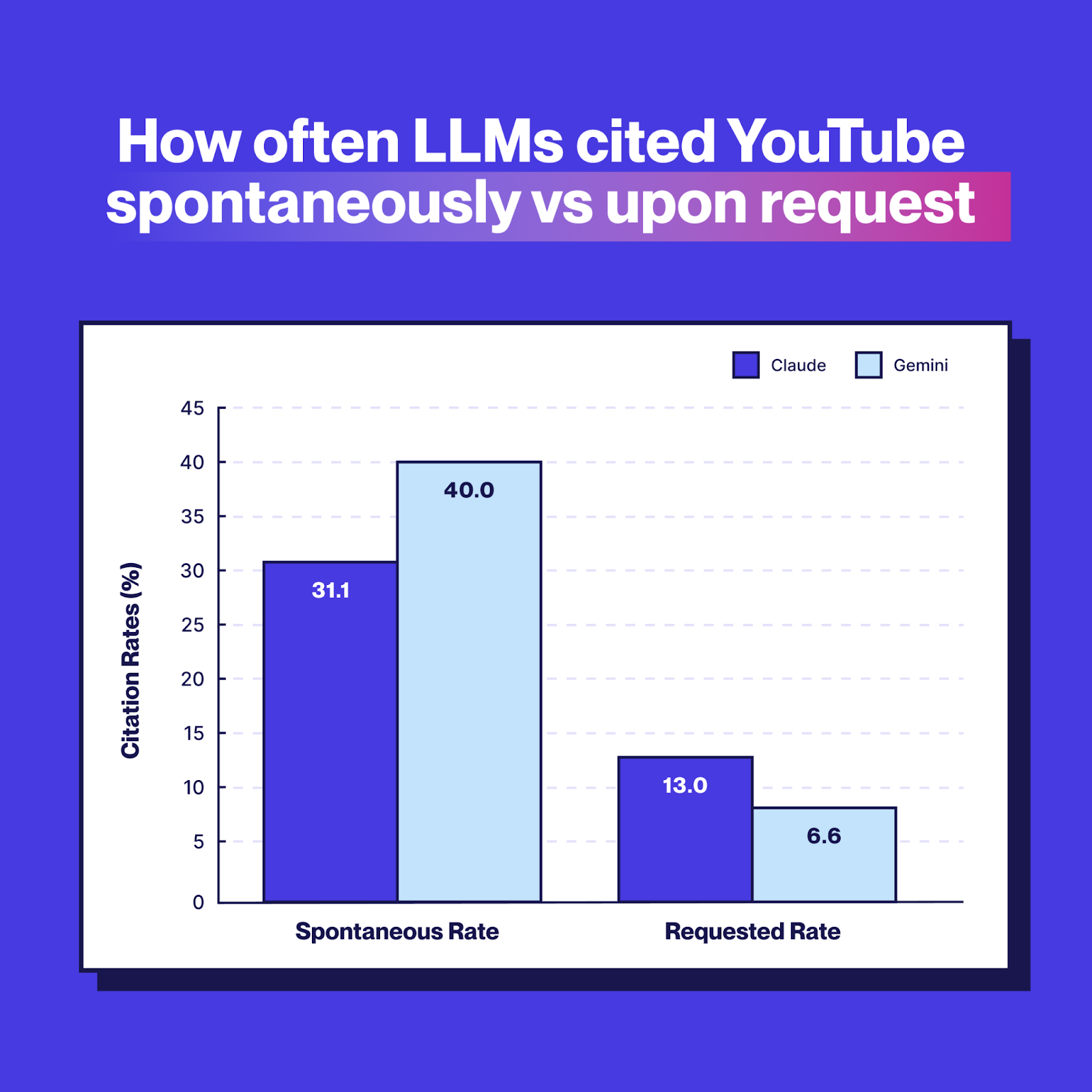

One of the most useful follow-ups was re-running the Tutorial queries for both Claude and Gemini with an explicit citation request, which was the same prompt addition used for ChatGPT.

The results shifted dramatically: when asked to list sources explicitly, both models cited YouTube far less. This suggests that the high spontaneous rates don't always reflect citation in the traditional sense. Rather, Gemini and Claude are often embedding YouTube videos directly into their responses as recommended content, which shows up in citation counts but is functionally different from using YouTube as a source to build an answer.

In other words, the models often aren't drawing on YouTube content when generating an answer; instead, they recommend it as 'further reading', if you will.

ChatGPT, by contrast, never surfaces YouTube links spontaneously. During manual review, we found just two instances – across 45 Tutorial queries – where ChatGPT offered to search for a video, and it never displayed one unprompted.

Take note: this doesn't mean ChatGPT uses YouTube less to build its answers; it just means it doesn't show its work. That's frustrating, as we were hoping this research would shed a bit more light on the mysterious ways ChatGPT works. As these models evolve and more people test them rigorously, ChatGPT's algorithm may become less opaque at some point... but we're not holding our breath.

Who Gets Cited? Top Domains by Model

Beyond YouTube, and across all languages, the citation landscapes for each model look quite different.

Claude's most cited sources were BBC and Reuters, with YouTube appearing in third, suggesting Claude treats it as a useful but secondary resource.

Gemini, by contrast, placed YouTube as its single most-cited source overall, well ahead of Reuters. In English-only queries, the same pattern held: bbc.com led Claude's top 10, with reuters.com second and youtube.com third, while youtube.com dominated Gemini's rankings.

ChatGPT shows a fundamentally different citation profile. It leans heavily on Wikipedia, Reddit, developer documentation (developer.mozilla.org, docs.docker.com), and arXiv. It's a more open-web distribution, which may reflect its different architecture and training approach.

If your business doesn’t yet have a Wikipedia page up, this may be your sign. But good luck getting it up, as the website is notoriously rigid in its publication standards (unfortunately, we speak from experience).

What This Means for Marketers

A few practical takeaways from the data:

YouTube remains a high-value platform for AI visibility, especially for tutorial content. If your brand produces educational or how-to video content, YouTube citations from AI models are a real channel worth investing in. Claude ranks YouTube at an average position of 3.6 when it does cite it; Gemini at 4.3. The reigning long-form video titan isn’t going away any time soon, especially for search visibility, despite fierce competition from TikTok.

Gemini's non-English bias toward YouTube is a structural consideration for international content strategies. Brands operating in Polish, French, or other non-English markets should be aware that Gemini may route video-oriented queries toward YouTube more heavily than Claude or ChatGPT would.

Prompt framing changes citation behavior. The fact that both Claude and Gemini cited YouTube far less when explicitly asked to list sources is a useful signal: the spontaneous embedding of YouTube videos is partly a UX feature, and not always a reflection of how the model weighted sources when formulating its answer.

ChatGPT's behavior is harder to read at a surface level. Its low spontaneous citation rate is a methodological artifact, not evidence that it ignores YouTube. Our follow-up analysis suggests ChatGPT may rely on YouTube content to build answers while simply not surfacing links unless asked.

Conclusion

Does Google favor its own products in AI responses? Based on this data, Gemini does cite YouTube substantially more than its competitors, and the pattern intensifies in non-English languages in a way that's hard to explain purely by content quality.

That said, this study analyzes citation behavior, not the underlying mechanisms. We can observe that Gemini over-indexes on YouTube in Polish queries; we can't definitively say why.

What the data gives us is a clearer map of how the three dominant AI models treat one of the web's most important content platforms, and a foundation for asking sharper questions about AI neutrality in content recommendation.

This is part of our ongoing research into how AI search handles multilingual content. If you want to boost your site’s chances of getting surfaced in LLM answers, try Weglot free for 14 days – no commitment required.
